Tool or Teacher? Looking at AI in the Classroom

10 FAQs for you in the classroom

Finding answers to Common Questions About AI in Education

We’ve compiled a list of common queries for you.


-

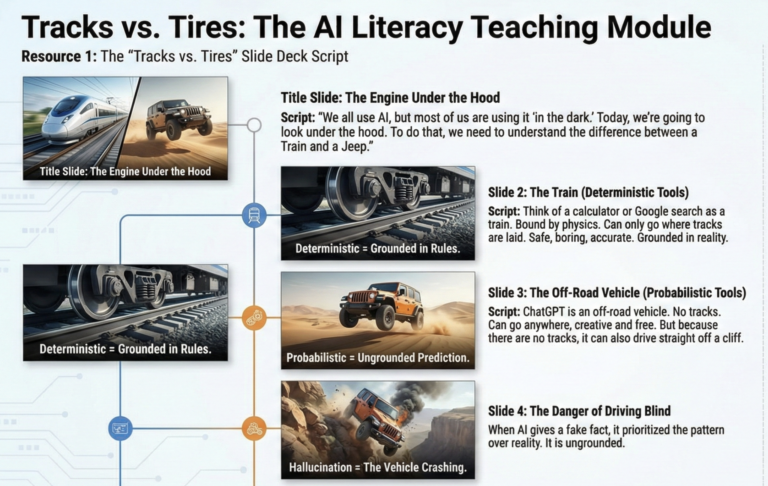

Taming the Wild Engine: How to Teach the Difference Between “Rule-Followers” and “Pattern-Matchers”

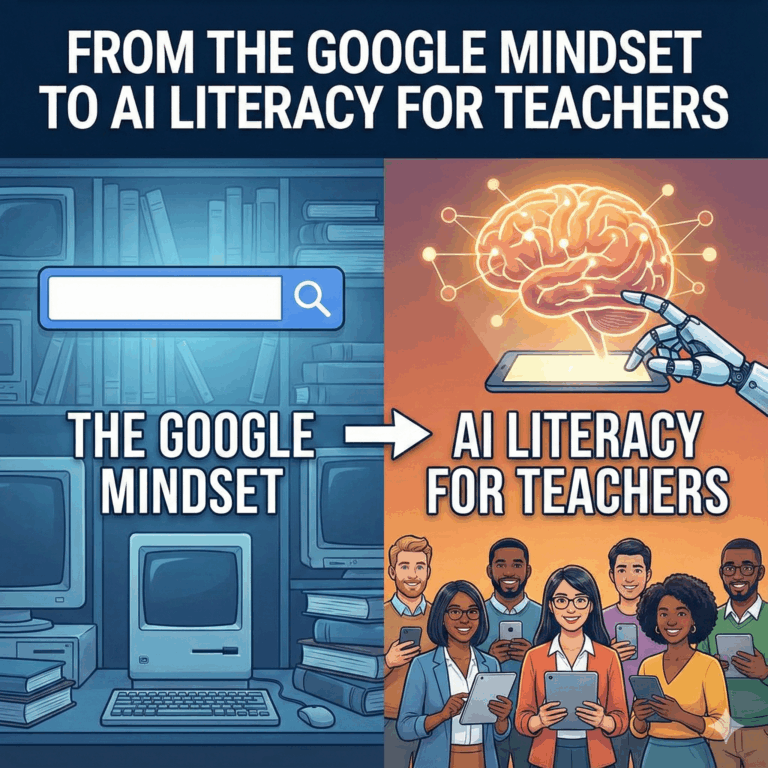

By: Kori Ashton

When students use AI, they often think they are using a smarter version of Google. I refer to this as the Google Mindset. They ask a question,…

-

Exposing the Ghost: Why AI is a Probabilistic Tool, Not a Truth Machine

If you are a teacher in Texas right now, you have likely experienced the “hallucination” phenomenon firsthand. You’re grading a well-written paper where the syntax is smooth and the vocabulary…

-

Happy 3rd Birthday, ChatGPT: Turning a Wicked Problem into a Needed Disruption in Education

On November 30, 2022, OpenAI released a research preview of ChatGPT. In three years, it has gone from curiosity to infrastructure, and in education it now looks a lot like…

-

Beyond the Ban: Why We Need Frameworks for Student AI Use

The debate over Artificial Intelligence in the classroom has shifted. Two years ago, the conversation was dominated by panic—fears of plagiarism, the death of the essay, and the erosion of…

-

From the “Google Mindset” to AI Literacy

Research based on latest news: The sources directly support and expand on a growing concern in education: the rapid deployment of AI tools is outpacing the development of AI literacy…

-

“Back to Pen and Paper” Isn’t About Paper: It’s About Where We Place the Struggle

Across social media, a familiar pattern is emerging from teachers: as soon as generative AI shows up in student writing, many instructors move everything “back to pen and paper.” Homework…

-

Prompt, Probe, Prove: Helping Students Be Smarter Than the Model

AI is already woven into everyday learning. Recent surveys suggest that more than 90% of undergraduates in some contexts now use generative AI tools, often across the full spectrum of…

-

AI in the Classroom: Tool, Teacher… or Teammate?

UNESCO recently hosted a Campus Masterclass called “AI in the classroom: tool or teacher?” aimed at educators around the world. The session is part of the UNESCO Campus programme, which…

-

A Professor’s Guide to Teaching with AI: From Search Engine to Thinking Partner

Video generated by NotebookLM. Below is the video’s transcript, which was fully generated by NotebookLM from human-provided resources.

An LLM Doesn’t “Think”

What if the biggest threat to modern education…