AI is already woven into everyday learning. Recent surveys suggest that more than 90% of undergraduates in some contexts now use generative AI tools, often across the full spectrum of study tasks—from summarizing readings to drafting assignments. At the same time, researchers warn that if we let AI automate too much of the work of learning, we risk hollowing out exactly the skills students need in an AI-saturated world: critical discernment, verification, and analytical reasoning.

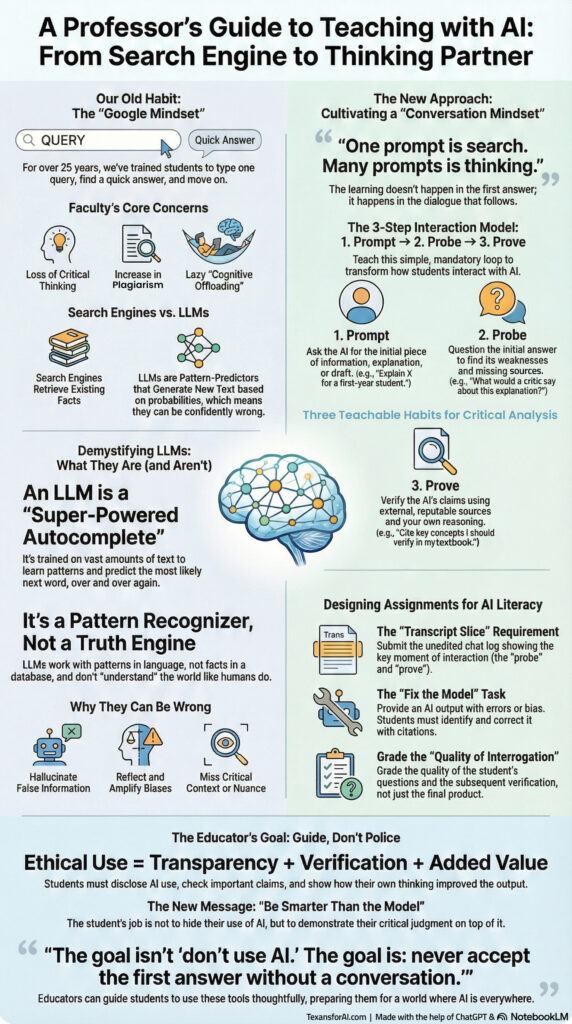

Under our Keep Education Intelligent initiative, Kori Ashton, MA—currently pursuing her doctorate at Johns Hopkins School of Education—proposes a simple teaching frame for this problem: Prompt, Probe, Prove. Instead of treating AI like a search engine that returns a single “right” answer, Ashton’s model trains students to use large language models (LLMs) as thinking partners.

The core message is straightforward:

One prompt is search. Many prompts is thinking.

Below is how the three-step framework lines up with emerging research on AI, pedagogy, and critical thinking.

Why a 3-Step Interaction Model?

Global research on AI in higher education shows two consistent patterns:

- Students mainly use AI to search for information or resolve doubts, while many professors use it for ideation, materials preparation, and research support.

- When faculty are trained and actively use AI, they design more effective activities for critical-thinking assessment and self-regulation, especially when those activities combine AI output with mandatory human review.

In other words, AI isn’t automatically transformative. It becomes powerful when teaching is designed so that students must question, refine, and verify what the model produces.

Tom Chatfield’s AI and the Future of Pedagogy makes a similar point: the best uses of AI keep learners actively constructing knowledge, managing cognitive load, and practicing metacognition—thinking about their own thinking—rather than outsourcing effort to the machine.

Ashton’s Prompt–Probe–Prove model is one way to operationalize that research in day-to-day classroom work.

Step 1: Prompt — Make the Question Do More Work

The first step is Prompt: students ask AI for an initial explanation, outline, or draft—but they are required to craft a high-quality prompt, not a quick query.

This is grounded in growing evidence that prompt design can itself be a form of critical thinking. Chatfield notes that teaching students to design prompts carefully forces them to clarify goals, constraints, and context—skills that align closely with precision in academic writing and reasoning.

In practice, Ashton’s framework asks students to include three elements in their first prompt:

- Role and audience – e.g., “Explain this concept for a first-year biology student.”

- Task and constraints – e.g., word limits, reading level, or required structure.

- Context – prior knowledge, specific course concepts, or dataset boundaries.

By doing this, the “prompt step” becomes a low-stakes way to practice goal-setting, audience awareness, and metacognitive planning—all of which are well-supported by instructional research as contributors to effective learning.
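For instructors who want students to see the three elements side by side, one option is a small template that refuses to produce a prompt until all three are filled in. The sketch below is only an illustration and is not part of Ashton’s framework: the `FirstPrompt` class, its field names, and the sample wording for the task and context are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FirstPrompt:
    """Hypothetical container for the three elements of a first prompt."""
    role_and_audience: str      # who the explanation is for
    task_and_constraints: str   # word limit, reading level, required structure
    context: str                # prior knowledge, course concepts, data boundaries

    def missing_elements(self) -> list[str]:
        """Return the names of any elements that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]

    def render(self) -> str:
        """Join the three elements into one prompt, one element per line."""
        missing = self.missing_elements()
        if missing:
            raise ValueError(f"Prompt is missing: {', '.join(missing)}")
        return "\n".join([
            self.role_and_audience.strip(),
            self.task_and_constraints.strip(),
            self.context.strip(),
        ])


# Illustrative values; only the first sentence comes from the article.
prompt = FirstPrompt(
    role_and_audience="Explain this concept for a first-year biology student.",
    task_and_constraints="Use at most 200 words and a three-part structure.",
    context="The class has covered cell structure but not cellular respiration.",
)
print(prompt.render())
```

A blank element raises an error rather than silently producing a weaker prompt, which mirrors the idea that the first prompt should be deliberate rather than a quick query.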

The output from this first prompt is not treated as finished work. It is raw material for the next two steps.

Step 2: Probe — Turn Answers into a Conversation

The second step is Probe: students are required to interrogate the model’s first answer through multiple follow-up prompts.

This aligns with two strands of current evidence:

- A case study from Spain used AI-enhanced activities in which students had to generate with AI and then analyze, reflect on, and edit that output themselves. This design treated AI as cognitive scaffolding and led to measurable gains in critical reasoning, ethical reflection, and self-regulation.

- Chatfield highlights that effective AI-supported learning environments deliberately structure tasks around judgment, requiring uniquely human contributions—such as critiquing AI interpretations or identifying hallucinated citations.

In Ashton’s model, students must ask probing questions such as:

- “What would a critic of this explanation say?”

- “Show me three alternative viewpoints from different theoretical traditions.”

- “Where is the model most uncertain, and what data is it assuming?”

The goal is to grade the quality of interrogation, not just the quality of the final paragraph. This mirrors Chatfield’s call to redesign assessment as a conversation about how students think with and about AI, rather than a surveillance system that simply checks if they avoided cheating.

When students learn to probe AI this way, they practice:

- spotting gaps and bias in generated content,

- comparing competing explanations,

- and articulating why one answer is more plausible than another.

Those are exactly the kinds of “human-in-the-loop” skills that global AI-in-higher-ed research identifies as essential for both academic success and workforce readiness.

Step 3: Prove — Verify with Evidence and Reasoning

The final step is Prove: students must check AI-generated claims against external sources and their own reasoning, and then document how their thinking changed.

The WISE–IIE Global Consortium report recommends a dual model, “generate with AI, critically review with humans,” as a guiding template for university teaching. In the Spanish action-research study, this human verification layer was supported by common ethics rubrics, explicit instruction in verification, and templates for checking AI output.

Ashton’s Prove step puts that recommendation into a simple, repeatable pattern:

- Source check – Students must identify at least two external, reputable sources (textbook, peer-reviewed article, or vetted reference) that confirm, complicate, or contradict key claims from the AI response.

- Reasoned judgment – They then write a short reflection explaining where the AI answer was solid, where it was weak or biased, and how they resolved discrepancies.

- Process evidence – Students submit a brief “transcript slice” (a trimmed section of their AI chat) along with their final work, making their prompts, probes, and verification steps visible for feedback.

This echoes emerging practice around portfolio-based, process-focused assessment, where students document their learning journey while AI helps surface patterns—but where reflection and curation remain “irreducibly human.”

The Prove step also lines up with broader policy recommendations that call on universities to clarify authorship, academic integrity, and acceptable AI co-creation rather than simply banning tools.

What This Looks Like in a Course

In a first-year seminar, a typical Prompt–Probe–Prove assignment might look like this:

- Prompt (20%) – Students submit their initial AI prompt and the model’s first answer. Grading focuses on clarity of the prompt: role, audience, task, and context.

- Probe (40%) – Students add 3–5 follow-up prompts that challenge, deepen, or reframe the answer, along with short annotations explaining why they asked each question. This is where instructors evaluate the quality of interrogation.

- Prove (40%) – Students write a short essay or explanation in their own words, citing external sources and explicitly noting where they agreed or disagreed with the model. They attach a transcript slice as process evidence.
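The 20/40/40 weighting above can be turned into a single grade with simple arithmetic. The following is a minimal sketch under stated assumptions: the article gives only the weights and the 3–5 follow-up range, so the 0 to 100 scale for each step, the function names, and the sample scores are hypothetical.

```python
# Weights from the sample assignment: Prompt 20%, Probe 40%, Prove 40%.
WEIGHTS = {"prompt": 0.20, "probe": 0.40, "prove": 0.40}


def weighted_grade(scores: dict[str, float]) -> float:
    """Combine per-step scores (assumed to be 0-100) into one grade out of 100."""
    if set(scores) != set(WEIGHTS):
        raise ValueError(f"Expected scores for exactly: {sorted(WEIGHTS)}")
    for step, score in scores.items():
        if not 0 <= score <= 100:
            raise ValueError(f"{step} score must be between 0 and 100")
    return round(sum(WEIGHTS[step] * scores[step] for step in WEIGHTS), 2)


def probe_count_ok(follow_ups: list[str]) -> bool:
    """Check that a submission has the 3-5 follow-up prompts the Probe step asks for."""
    return 3 <= len(follow_ups) <= 5


# Hypothetical scores for one student.
grade = weighted_grade({"prompt": 90, "probe": 75, "prove": 80})
print(grade)  # 0.2*90 + 0.4*75 + 0.4*80 = 18 + 30 + 32 = 80.0
```

Because Probe and Prove together carry 80% of the grade, a polished first prompt alone cannot earn a passing mark, which matches the emphasis on interrogation and verification.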

This kind of design is consistent with the global recommendation to treat AI as cognitive scaffolding, not as a replacement for student thinking—and to integrate AI use into curricula in ways that highlight ethics, verification, and critical reflection.

From Policing to Guiding

Both the WISE–IIE global report and Chatfield’s white paper converge on a key message: the real risk is not that students use AI, but that they use it uncritically.

Ashton’s Prompt–Probe–Prove framework offers a concrete way for educators to respond:

- It acknowledges that AI use is now normalized.

- It raises the bar by centering judgment, verification, and metacognition.

- It shifts assessment from “Did you use AI?” to “How did you think with it?”

Within the Keep Education Intelligent initiative, this model positions educators not as AI police, but as guides who help students become “smarter than the model”—able to use powerful tools without surrendering the very human work of learning.